training: Python boolean indicating whether the layer should behave in.Should be utilized, while a False entry indicates that theĬorresponding timestep should be ignored. mask: Binary tensor of shape indicating whetherĪ given timestep should be masked (optional, defaults to None).Īn individual True entry indicates that the corresponding timestep.Unrolling can speed-up a RNN,Īlthough it tends to be more memory-intensive. If True, the network will be unrolled,Įlse a symbolic loop will be used. However, most TensorFlow data is batch-major, so byĭefault this function accepts input and emits output in batch-major Using time_major = True is a bit moreĮfficient because it avoids transposes at the beginning and end of the If True, the inputs and outputs will be in shape time_major: The shape format of the inputs and outputs tensors.Sample at index i in a batch will be used as initial state for the sample Sequence backwards and return the reversed sequence. go_backwards: Boolean (default False).Whether to return the last state in addition to the Whether to return the last output in the output Fraction of the units to dropįor the linear transformation of the recurrent state. recurrent_dropout: Float between 0 and 1.bias_constraint: Constraint function applied to the bias vector.recurrent_constraint: Constraint function applied to the.kernel_constraint: Constraint function applied to the kernel weights.

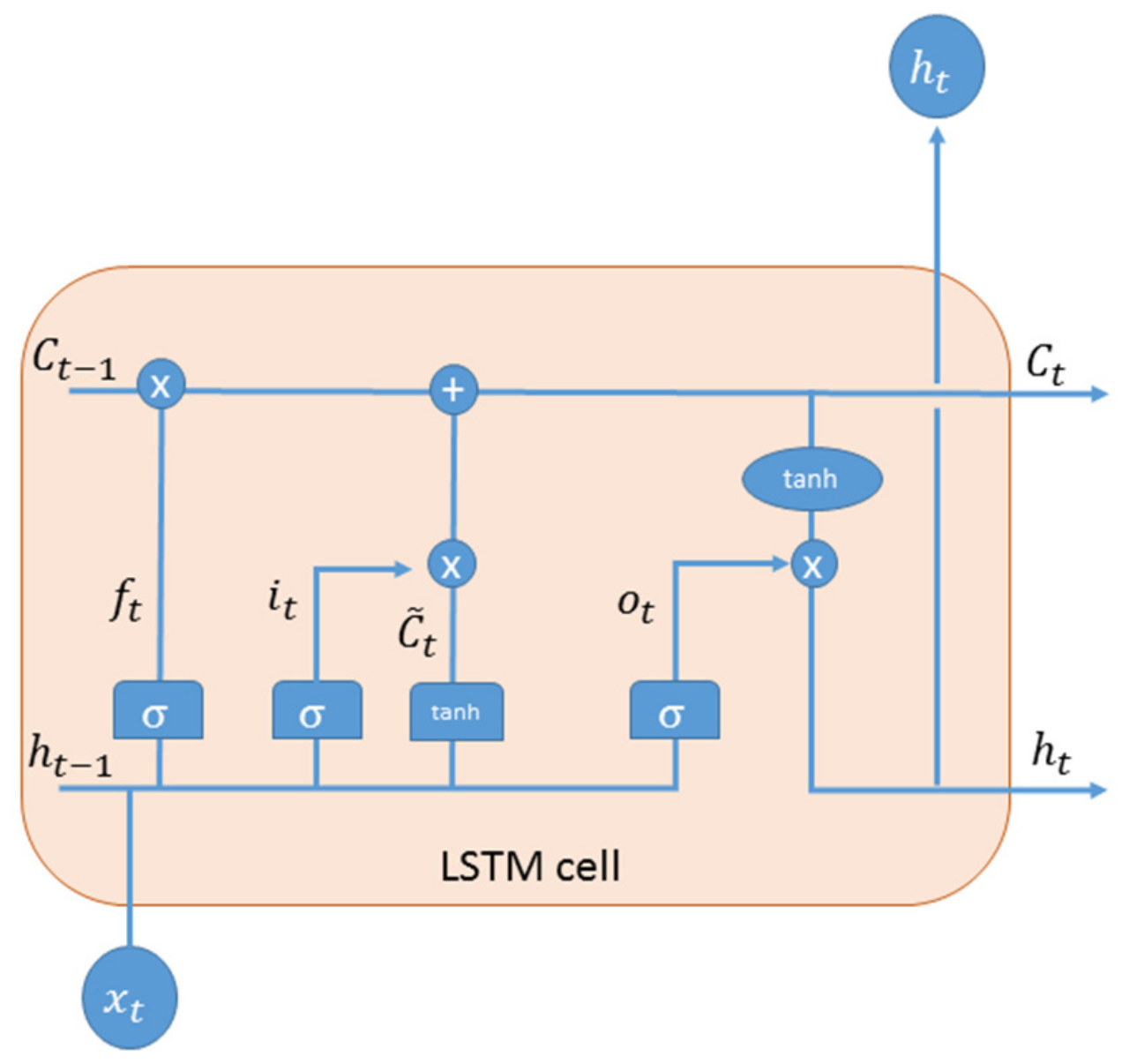

activity_regularizer: Regularizer function applied to the output of the.bias_regularizer: Regularizer function applied to the bias vector.recurrent_regularizer: Regularizer function applied to the.kernel_regularizer: Regularizer function applied to the kernel weights.Setting it to true will also forceīias_initializer="zeros". unit_forget_bias: Boolean (default True).bias_initializer: Initializer for the bias vector.Matrix, used for the linear transformation of the recurrent state. recurrent_initializer: Initializer for the recurrent_kernel weights.kernel_initializer: Initializer for the kernel weights matrix, used for.use_bias: Boolean (default True), whether the layer uses a bias vector.If you pass None, no activation isĪpplied (ie. recurrent_activation: Activation function to use for the recurrent step.ĭefault: sigmoid ( sigmoid).activation: Activation function to use.ĭefault: hyperbolic tangent ( tanh).units: Positive integer, dimensionality of the output space.shape ) ( 32, 4 ) > print ( final_carry_state. shape ) ( 32, 10, 4 ) > print ( final_memory_state. LSTM ( 4, return_sequences = True, return_state = True ) > whole_seq_output, final_memory_state, final_carry_state = lstm ( inputs ) > print ( whole_seq_output.

LSTM ( 4 ) > output = lstm ( inputs ) > print ( output.
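To make several of the argument descriptions concrete, here is a minimal NumPy sketch of an LSTM forward pass. It is an illustration under stated assumptions, not the Keras implementation: the helper names `init_params` and `lstm_forward` are hypothetical, the gate ordering (input, forget, cell, output) and the zero initial state are assumed, and dropout, regularizers, constraints, `stateful`, `time_major`, and `unroll` are left out.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def init_params(input_dim, units, unit_forget_bias=True, seed=0):
    """Hypothetical helper: small random weights, assumed gate order [i, f, c, o]."""
    rng = np.random.default_rng(seed)
    kernel = rng.normal(scale=0.1, size=(input_dim, 4 * units))
    recurrent_kernel = rng.normal(scale=0.1, size=(units, 4 * units))
    bias = np.zeros(4 * units)  # zero bias, as unit_forget_bias requires
    if unit_forget_bias:
        bias[units:2 * units] = 1.0  # add 1 to the forget-gate slice
    return kernel, recurrent_kernel, bias

def lstm_forward(inputs, params, return_sequences=False, return_state=False,
                 go_backwards=False, mask=None):
    """inputs: [batch, timesteps, feature]; mask: boolean [batch, timesteps]."""
    kernel, recurrent_kernel, bias = params
    batch, timesteps, _ = inputs.shape
    units = recurrent_kernel.shape[0]
    h = np.zeros((batch, units))  # memory state (also the per-step output)
    c = np.zeros((batch, units))  # carry state
    steps = range(timesteps - 1, -1, -1) if go_backwards else range(timesteps)
    outputs = []
    for t in steps:
        z = inputs[:, t] @ kernel + h @ recurrent_kernel + bias
        i, f, g, o = np.split(z, 4, axis=1)
        c_new = sigmoid(f) * c + sigmoid(i) * np.tanh(g)
        h_new = sigmoid(o) * np.tanh(c_new)
        if mask is not None:
            keep = mask[:, t][:, None]        # True means "use this timestep"
            h_new = np.where(keep, h_new, h)  # ignored steps carry the state over
            c_new = np.where(keep, c_new, c)
        h, c = h_new, c_new
        outputs.append(h)
    out = np.stack(outputs, axis=1) if return_sequences else h
    return (out, h, c) if return_state else out

inputs = np.random.default_rng(1).normal(size=(32, 10, 8))
params = init_params(input_dim=8, units=4)

output = lstm_forward(inputs, params)
whole_seq_output, final_memory_state, final_carry_state = lstm_forward(
    inputs, params, return_sequences=True, return_state=True)
print(output.shape)            # (32, 4)
print(whole_seq_output.shape)  # (32, 10, 4)
```

In this sketch, a False mask entry leaves both states unchanged for that sample, so masking the trailing timesteps gives the same final state as truncating the sequence. With `go_backwards=True` the returned sequence is in processing order, that is, reversed relative to the input.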
